In Today's Issue:

🚀 NVIDIA's Vera Rubin + Groq 3 LPX: $20B bet on inference chips, 10x power efficiency

🐍 OpenAI acquires Astral (Ruff/uv) to own the Python dev stack end-to-end

📊 AI spending officially flips: 80% inference, 20% training by EOY 2026

💾 AMD doubles down with Samsung on HBM4 for MI455X

📸 Shutterstock expands AI training datasets to meet "critical mass" demand

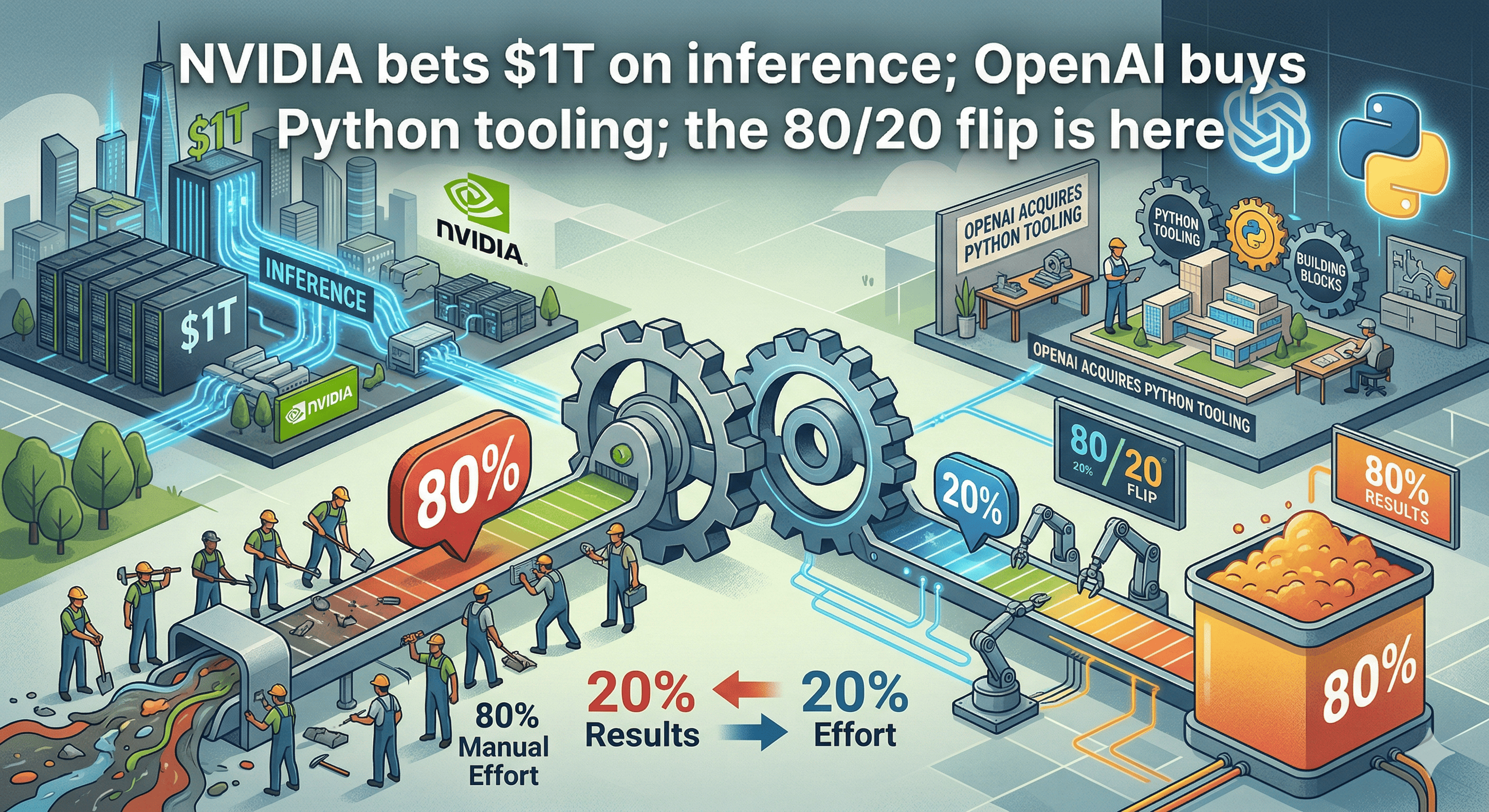

The narrative: Training was the hype. Inference is the business.

🚀 NVIDIA's $1 Trillion Inference Play

NVIDIA GTC 2026 wrapped with Jensen Huang unveiling Vera Rubin — 10x power efficiency over Grace Blackwell. The headline? A $20B licensing deal with Groq to produce the Groq 3 LPX (SRAM-based inference accelerator, shipping Q3 2026 on Samsung's 4nm process).

Jensen's message: "Tokens are the new unit of value. Data centers are revenue factories."

NVIDIA is forecasting $1 trillion in AI chip revenue between 2025 and 2027, almost entirely on inference infrastructure. They also launched Dynamo 1.0, an open-source orchestration layer that delivered a 7x inference performance boost for Blackwell GPUs.

Cisco, IBM, and Google Cloud are all expanding NVIDIA inference partnerships.

The takeaway: This is the official coronation of inference as the primary revenue driver. If you're still spec'ing training clusters while your inference stack runs on duct tape and prayers, you're building backwards. The money is in serving tokens, not training models.

🐍 OpenAI Buys Python (Sort Of)

OpenAI acquired Astral — the team behind Python's fastest linter (Ruff) and package manager (uv). They're integrating these tools directly into Codex to "enable AI systems to participate more comprehensively in the entire software development workflow."

This isn't just an acqui-hire. It's OpenAI building vertical integration into developer tooling. They're not content to be "the best model" — they want to own the entire stack from code generation to dependency management to execution.

Anthropic (Claude Code) just got put on notice.

The takeaway: Whoever controls the tooling controls the workflow. If your AI coding agent doesn't understand your dependency graph, linting rules, and runtime environment, you're shipping half-baked code. This move is about eliminating friction and locking developers into the OpenAI ecosystem.

📊 The Great Inversion: 80% Inference, 20% Training

Multiple reports confirm the spending flip:

Lenovo's CEO: 80% inference, 20% training by EOY 2026

Gartner: 55% of AI IaaS spending is already inference, rising to 65% by 2029

Deloitte: 66.7% of AI compute this year is inference

One analysis: inference now represents 80-90% of a production AI system's lifetime cost

The AI industry just admitted training is a one-time cost and inference is the recurring revenue engine. Enterprises aren't experimenting anymore — they're deploying. That means continuous compute, edge deployments, hybrid infrastructure, and latency optimization.

Training got the headlines. Inference gets the budget.

The takeaway: If your infrastructure strategy is still "buy GPUs for training and figure out inference later," you're about to get crushed by OpEx. Inference economics are fundamentally different: lower margins, higher utilization requirements, latency SLAs, token-level cost tracking. The companies that figure this out first will dominate the next decade.
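To make the one-time versus recurring split concrete, here is a back-of-the-envelope sketch. Every number in it is a hypothetical placeholder (training budget, token volume, price per million tokens, deployment length), not a figure from any of the reports above. Swap in your own.

```python
def inference_share(training_cost, monthly_tokens, cost_per_million_tokens, months):
    """Return the fraction of lifetime spend that goes to inference.

    training_cost: one-time cost in dollars
    monthly_tokens: tokens served per month
    cost_per_million_tokens: fully loaded serving cost in dollars
    months: how long the model stays in production
    """
    monthly_inference = monthly_tokens / 1_000_000 * cost_per_million_tokens
    lifetime_inference = monthly_inference * months
    return lifetime_inference / (training_cost + lifetime_inference)


# Hypothetical inputs, chosen only for illustration.
share = inference_share(
    training_cost=2_000_000,          # $2M one-time training run (assumed)
    monthly_tokens=50_000_000_000,    # 50B tokens served per month (assumed)
    cost_per_million_tokens=5.0,      # $5 per million tokens (assumed)
    months=36,                        # three years in production (assumed)
)
print(f"Inference share of lifetime cost: {share:.1%}")
```

With these made-up inputs, inference comes to about 82% of lifetime spend. The share keeps climbing the longer the model stays in production, because the training cost never recurs.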


💾 AMD + Samsung: HBM4 for the MI455X

AMD and Samsung signed an MOU positioning Samsung as the primary HBM4 supplier for AMD's next-gen Instinct MI455X GPU. They're also optimizing DDR5 for AMD's 6th Gen EPYC CPUs.

AMD expanded its partnership with Flex for US-based manufacturing of the MI355X platform and announced strategic AI infrastructure deals in South Korea with Upstage and NAVER Cloud.

AMD is countering NVIDIA's supply chain dominance by locking down memory early and building domestic manufacturing. HBM4 will be critical for inference workloads that are memory-bound rather than compute-bound. This is AMD betting on bandwidth over raw TFLOPS, which is the right call for inference.

The takeaway: Memory bandwidth is the new bottleneck. If you're running inference on models larger than your GPU's VRAM, you're paying in milliseconds of latency and dollars of wasted compute. AMD is positioning the MI455X as the inference-first alternative to NVIDIA's Vera Rubin. Smart money is on heterogeneous deployments: train on NVIDIA, infer on AMD.
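Why bandwidth matters more than TFLOPS for serving: in single-stream decoding, each generated token requires reading roughly every model weight from memory once, so memory bandwidth caps tokens per second. The sketch below applies that common rule of thumb. It ignores KV-cache reads, batching, and multi-GPU sharding, and the model size and bandwidth figures are hypothetical, not specs for the MI455X or Vera Rubin.

```python
def max_decode_tokens_per_sec(params_billion, bytes_per_param, bandwidth_tb_per_sec):
    """Rough upper bound on batch-size-1 decode speed for a memory-bound model.

    Assumes every weight is read once per generated token and nothing else
    touches memory (a simplification).
    """
    weight_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_per_sec = bandwidth_tb_per_sec * 1e12
    return bandwidth_bytes_per_sec / weight_bytes


# Hypothetical example: a 70B-parameter model in 16-bit weights
# on an accelerator with 4 TB/s of memory bandwidth.
baseline = max_decode_tokens_per_sec(70, 2, 4.0)
doubled = max_decode_tokens_per_sec(70, 2, 8.0)

print(f"4 TB/s: ~{baseline:.1f} tokens/sec ceiling")
print(f"8 TB/s: ~{doubled:.1f} tokens/sec ceiling")
```

Under these assumptions the ceiling is about 29 tokens per second, and doubling bandwidth doubles it no matter how many TFLOPS the chip has. That is the logic behind betting on HBM.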

📸 Shutterstock Goes All-In on Training Data

Shutterstock announced a "major expansion" of its licensed training datasets, providing developers and enterprises with multimodal content (images, video, audio) for the "full model training lifecycle."

The company called out "accelerating demand for diverse and rights-cleared data as generative AI development reaches critical mass globally."

Training data is becoming a licensed commodity. As synthetic data hits quality ceilings and web scraping faces legal challenges, companies like Shutterstock are positioning as the "AWS of training data" — pay per use, fully licensed, curated for quality.

The takeaway: If you're training models on unlicensed data in 2026, you're building on legal quicksand. The days of "crawl everything and apologize later" are ending. Expect more lawsuits, more licensing deals, and more enterprises demanding provenance for every training sample. Data licensing will be a line item in your AI budget.

That's it for today. If this was useful, forward it to someone making AI infrastructure decisions.

ClusterMind News
